On February 16, Apple posted a message to customers from CEO Tim Cook explaining why Apple does not plan to assist the FBI with breaking the passcode on San Bernardino shooter Syed Rizwan Farook’s iPhone.

“The United States government has demanded that Apple take an unprecedented step which threatens the security of our customers,” Cook writes. “We oppose this order, which has implications far beyond the legal case at hand.”

Imagine you were maintaining a timeline of significant modern data security events. Apple’s resounding “No” to the FBI on Tuesday would have to be on it.

What Does the FBI Want from Apple and Why?

For those who need it, here’s a quick summary of what led us to this point.

- The FBI seized Farook’s work smartphone, an iPhone 5C, following the shooting. It then obtained a warrant to search the phone as well as permission to do so from San Bernardino County Department of Public Health, Farook’s employer.

- Like many iPhones, Farook’s device is protected by a passcode and uses a security feature that erases all data on the phone after 10 incorrect passcode attempts. Behind the scenes, the erase works by destroying the key needed to decrypt the data.

- The FBI does not have the passcode, so it obtained an order from a federal court in California compelling Apple’s assistance.

- But the order doesn’t simply ask Apple to crack the passcode. Instead, it asks the company to build a new, custom version of its iOS software that the FBI can use to disable the security feature on this specific phone and access Farook’s data.
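The auto-erase behavior described in the second bullet can be illustrated with a small model. The sketch below is a hypothetical Python class. `ToyAutoEraseDevice` and its PBKDF2 settings are assumptions for illustration, not Apple’s design. The class counts incorrect passcode attempts and, on the tenth, discards the key that protects the data. On a real iPhone, the data key is derived from the passcode together with a hardware key and the limit is enforced below the operating system. Also, setting a Python reference to `None` does not securely wipe memory. The sketch only shows why destroying the key is equivalent to erasing the data.

```python
import hashlib
import hmac
import secrets
from typing import Optional

MAX_ATTEMPTS = 10         # the limit described in the article
KDF_ITERATIONS = 100_000  # assumed work factor for this sketch


class ToyAutoEraseDevice:
    """Hypothetical model of an auto-erase policy; not Apple's implementation."""

    def __init__(self, passcode: str) -> None:
        self._salt = secrets.token_bytes(16)
        self._verifier = self._derive(passcode)
        # Random stand-in for the key that protects the user's data.
        self._data_key: Optional[bytes] = secrets.token_bytes(32)
        self.failed_attempts = 0

    def _derive(self, passcode: str) -> bytes:
        # A deliberately slow key-derivation function makes every guess costly.
        return hashlib.pbkdf2_hmac("sha256", passcode.encode(), self._salt, KDF_ITERATIONS)

    @property
    def wiped(self) -> bool:
        return self._data_key is None

    def unlock(self, guess: str) -> Optional[bytes]:
        """Return the data key on success, or None on failure or after a wipe."""
        if self.wiped:
            return None
        if hmac.compare_digest(self._derive(guess), self._verifier):
            self.failed_attempts = 0  # in this model, a correct entry resets the count
            return self._data_key
        self.failed_attempts += 1
        if self.failed_attempts >= MAX_ATTEMPTS:
            # "Erasing" the phone: drop the only copy of the key, so the
            # encrypted data can no longer be decrypted.
            self._data_key = None
        return None
```

Consider a device created with `ToyAutoEraseDevice("1234")`. Calling `unlock("1234")` returns its key. After ten wrong guesses, `wiped` is `True`, and even the correct passcode returns `None`.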

And this led to Cook’s strong response.

Tim Cook Defends Apple Encryption Standards

Tim Cook’s letter to customers not only establishes Apple’s position on the FBI’s request and the court order but also serves as a “State of Data Security” from a sitting CEO, succinctly summarizing the value an enterprise places on encryption and key management.

Encryption’s Importance:

Cook states clearly that the best way to protect the sensitive data iPhone users generate and store on their devices is encryption, combined with restricting Apple’s own access to customer data.

“For many years, we have used encryption to protect our customers’ personal data because we believe it’s the only way to keep their information safe. We have even put that data out of our own reach, because we believe the contents of your iPhone are none of our business.”

No Encryption Backdoors:

Apple claims it has been very supportive of FBI investigations – including this one – and has to date:

- Provided data in Apple’s possession

- Complied with valid subpoenas and search warrants

- Made Apple engineers available as advisors

- Provided suggestions regarding investigative options

So what’s the reason for non-compliance this time?

Cook argues that creating a new version of the iPhone operating system, as ordered, would create a way to circumvent the security features built into his company’s products to access encrypted data – a Pandora’s Box that Apple doesn’t want to build or open.

“Building a version of iOS that bypasses security in this way would undeniably create a backdoor. And while the government may argue that its use would be limited to this case, there is no way to guarantee such control.”

Keys to the Kingdom:

Cook also points out that encrypted systems and data can’t remain secure if the key required to unlock them is exposed. He argues that complying with the order would undermine customer data protection.

“For years, cryptologists and national security experts have been warning against weakening encryption. Doing so would hurt only the well-meaning and law-abiding citizens who rely on companies like Apple to protect their data. Criminals and bad actors will still encrypt, using tools that are readily available to them.”

Establishing a Data Security Precedent

While the significance of Cook’s defense of Apple’s encryption and key management practices can’t be overstated, the legal ramifications of this situation are equally important.

“We can find no precedent for an American company being forced to expose its customers to a greater risk of attack,” Cook noted in his letter. We may be about to see a new precedent set.

He goes on to note that “the FBI is proposing an unprecedented use of the All Writs Act of 1789 to justify an expansion of its authority.” But what is the All Writs Act of 1789?

The All Writs Act is, in essence, a catch-all law passed during the first session of the United States Congress. It authorizes federal courts to issue “all writs necessary or appropriate in aid of their respective jurisdictions.” It is being invoked here because no existing law explicitly states that a technology company can be compelled to create new software that could undermine its data security, or that of its customers, to help with a law enforcement investigation.

Will using the All Writs Act for this purpose work? That will likely depend on how the last paragraph of the court order is interpreted:

“To the extent that Apple believes that compliance with this Order would be unreasonably burdensome, it may make an application to this Court for relief within five business days of receipt of the Order.”

So the question becomes: can Apple successfully argue that creating this brand-new software – a backdoor to its own encryption features that could be exploited, as Cook describes it – is by definition “unreasonably burdensome”?

I think we’re about to find out.

As Cook writes, “We believe it would be in the best interest of everyone to step back and consider the implications.”

It will be interesting to see if a “step back” is really all it takes to more clearly define where data security stops and national security starts in today’s digital world.

Public Perception and Unlikely Allies

If you’re like me, you likely expected the San Bernardino tragedy to prompt new debates in America around the topics of gun control and terrorism, and rightfully so.

However, what I think few foresaw was that this would be the impetus for a new cyber security battle between the FBI and the world’s most valuable brand.

While we wait to see whether Apple can successfully challenge the court order in the Farook case, people and organizations who usually land on opposite sides of most arguments are, surprisingly, coming down on the same side of this debate.

Unsurprisingly, Edward Snowden has publicly sided with Apple:

The @FBI is creating a world where citizens rely on #Apple to defend their rights, rather than the other way around. https://t.co/vdjB6CuB7k

— Edward Snowden (@Snowden) February 17, 2016

Somewhat more unexpectedly, General Michael Hayden, former Director of the National Security Agency, also sided with Apple, saying:

“I disagree with [FBI director] Jim Comey. I actually think end-to-end encryption is good for America. …I know encryption represents a particular challenge for the FBI. But on balance, I actually think it creates greater security for the American people than the alternative: a backdoor.”

Meanwhile, the White House and presidential candidate Donald Trump have apparently found something they can agree on. Trump weighed in, stating:

“Apple, this is one case, this is a case that certainly we should be able to get into the phone and we should find out what happened, why it happened, and maybe there’s other people involved and we have to do that.”

And White House press secretary Josh Earnest echoed support for the court order, telling reporters that the FBI is “simply asking for something that would have an impact on this one device.”

Not so, say Google and Microsoft, two of Apple’s fiercest competitors. Both organizations released statements siding with Cook’s position.

Taking to Twitter, Google CEO Sundar Pichai wrote:

“Important post by @tim_cook. Forcing companies to enable hacking could compromise users’ privacy.

“We know that law enforcement and intelligence agencies face significant challenges in protecting the public against crime and terrorism.

“We build secure products to keep your information safe and we give law enforcement access to data based on valid legal orders.

“But that’s wholly different than requiring companies to enable hacking of customer devices & data. Could be a troubling precedent.

“Looking forward to a thoughtful and open discussion on this important issue.”

As are we.

But what about the majority of Americans? Where do they stand?

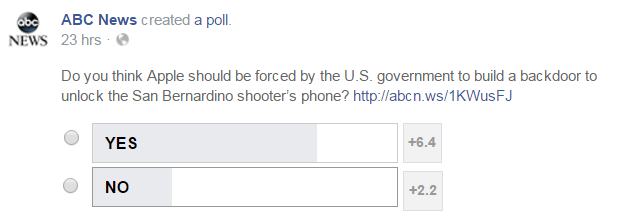

Many polls are underway to gauge public sentiment. As of 8 a.m. EST on February 18, 2016, an ABC News Facebook poll found that 74% of respondents favored forcing Apple to comply with the court order.

Where do you stand? Let us know on Twitter via @GemaltoSecurity.

The Enterprise Takeaway

Only time will tell if Apple will be able to defend itself against this latest court order, but with data security back in the headlines, this is yet another opportunity for IT and security professionals to take a seat at the corporate table.

Your enterprise needs a unified plan for protecting enterprise data assets. As your sensitive data is stored, processed, and shared outside of your control, you need a way to keep it safe wherever it goes, including today’s cloud-enabled environments.

If you don’t have a plan to secure your data, here are three steps you need to take to protect your information now:

First, locate your sensitive data.

Conduct an audit to identify where your most sensitive assets reside. This includes on-premises data centers as well as extended data centers (virtual, private, and public cloud).

Audit your storage and file servers, applications, databases, and virtual machines, and also remember the data that’s flowing across your network and between data centers.
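To illustrate the first step, the sketch below walks a directory tree and flags files that appear to contain U.S. Social Security numbers or payment card numbers. Card candidates are filtered with the Luhn checksum. The function names and the two regular expressions are hypothetical and deliberately simple. A real audit would also cover databases, virtual machines, and network traffic, and would typically rely on a dedicated data-discovery tool.

```python
import re
from pathlib import Path

# Hypothetical detection rules; real discovery tools use far richer classifiers.
PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}


def luhn_ok(candidate: str) -> bool:
    """Luhn checksum, used to discard digit runs that cannot be card numbers."""
    digits = [int(c) for c in candidate if c.isdigit()]
    checksum = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        checksum += d
    return checksum % 10 == 0


def scan_tree(root: str) -> dict:
    """Map each file path under root to the sorted list of sensitive-data labels found."""
    findings = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip it (a real audit should report this)
        hits = set()
        for label, pattern in PATTERNS.items():
            for match in pattern.finditer(text):
                if label == "card_number" and not luhn_ok(match.group()):
                    continue
                hits.add(label)
        if hits:
            findings[str(path)] = sorted(hits)
    return findings
```

The output of such a scan is an inventory of where sensitive data lives, which is the input the encryption and key management steps need.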

Next, encrypt it.

An encryption solution should meet two key objectives: it should protect the data itself, and it should let you define who and what can access the data once it is encrypted.

Apply this solution both to data in motion across your network and infrastructure and to data at rest at every layer of the technology stack across your distributed infrastructure.
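For data in motion, one concrete piece of such a policy is refusing unverified or outdated TLS connections. The sketch below uses Python’s standard `ssl` module. The function name and the choice of TLS 1.2 as the minimum version are assumptions for illustration. The sketch covers only the transport side. Encrypting data at rest would need a vetted cryptography library or platform feature, which is not shown here.

```python
import ssl
from typing import Optional


def make_client_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Client-side TLS settings for data in motion (illustrative policy)."""
    # Loads the system's trusted CAs, or only ca_file if one is given.
    ctx = ssl.create_default_context(cafile=ca_file)
    # Refuse legacy protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # create_default_context already enables these; set explicitly to document intent.
    ctx.verify_mode = ssl.CERT_REQUIRED
    ctx.check_hostname = True
    return ctx
```

A client would then wrap its socket with `ctx.wrap_socket(sock, server_hostname=host)`, where `host` is the server it expects to reach. The handshake fails if the server’s certificate does not verify for that name.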

Finally, own and manage your keys.

Manage and store your keys centrally, yet separate from the data, to maintain control and ownership of both the encrypted information and the keys.

Be sure that the solution you choose provides comprehensive logging and auditing capabilities so you can easily report access to protected data and keys, particularly if you have internal or regulatory compliance obligations.
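The key management step can be sketched as a small central service with three jobs. It holds keys apart from the data they protect, releases a key only to principals allowed by policy, and records every request. Everything here is hypothetical, including `KeyManager`, its methods, and the principal names in the example. In production, this role is usually filled by a hardware security module or a key management service. The audit log would also be written to tamper-evident storage rather than kept in memory.

```python
import secrets
from datetime import datetime, timezone


class KeyManager:
    """Hypothetical central key store kept separate from the encrypted data."""

    def __init__(self) -> None:
        self._keys = {}      # key_id -> key bytes; never stored beside the data
        self._policy = {}    # key_id -> set of principals allowed to use the key
        self.audit_log = []  # append-only record of every key operation

    def create_key(self, key_id: str, allowed: set, creator: str) -> None:
        self._keys[key_id] = secrets.token_bytes(32)  # 256-bit random key
        self._policy[key_id] = set(allowed)
        self._record("create", key_id, creator, True)

    def get_key(self, key_id: str, principal: str) -> bytes:
        """Release a key only if policy allows it; log the request either way."""
        allowed = principal in self._policy.get(key_id, set())
        self._record("get", key_id, principal, allowed)
        if not allowed:
            raise PermissionError(f"{principal} may not use key {key_id}")
        return self._keys[key_id]

    def access_report(self, key_id: str) -> list:
        """Summarize who touched a key, e.g. for a compliance review."""
        return [
            (entry["action"], entry["principal"], entry["allowed"])
            for entry in self.audit_log
            if entry["key_id"] == key_id
        ]

    def _record(self, action: str, key_id: str, principal: str, allowed: bool) -> None:
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "key_id": key_id,
            "principal": principal,
            "allowed": allowed,
        })
```

Suppose `km.create_key("payroll-db", {"payroll-app"}, creator="security-admin")` has been called. A later call to `km.get_key("payroll-db", "marketing-app")` raises `PermissionError`, and `km.access_report("payroll-db")` lists both permitted and denied requests. Because the encrypted data store holds only a `key_id`, compromising it does not expose the keys.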

To learn more about enterprise encryption and key management best practices, download our eBook.